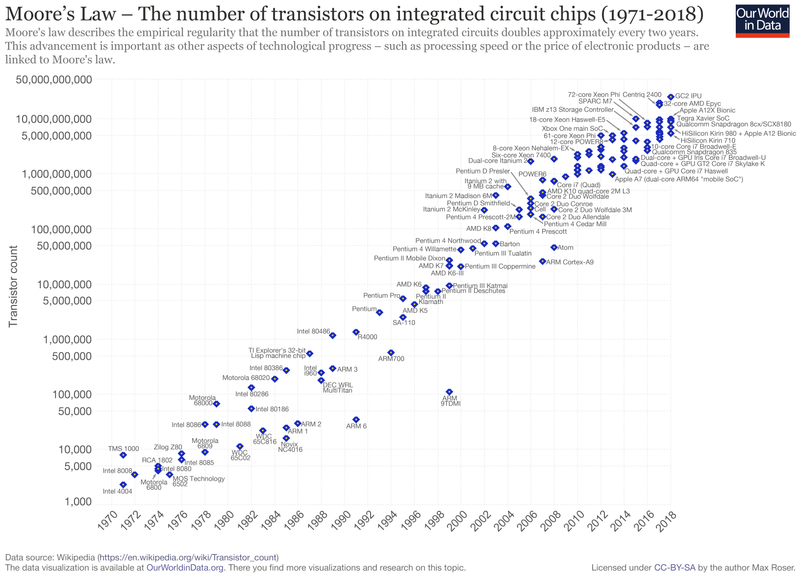

In 1965, Gordon Moore was asked to write for a special edition of Electronics magazine about the future of silicon components during the next decade. Integrated circuits (we explain what these are below), known today as computer chips, were invented in the late 1950s and had only recently entered production. When analysing the achievements made by his company, Fairchild Semiconductor (he went on to co-found Intel in 1968), and others in the previous years, Moore observed that the number of transistors and other electronic components per integrated circuit had doubled each year, and he projected that this rate of growth would continue into the future. In 1975 he revised the pace to a doubling about every two years. This prediction became known as Moore’s Law, which states that the number of transistors in an integrated circuit doubles about every 2 years.

Moore’s main goal was to convey the idea that integrated circuits would lower the costs of technology: the larger the number of components, the lower the cost per component, therefore decreasing the price of computers and other electronic devices. According to the law, which became an industry goal and consequently a self-fulfilling prophecy, processor speeds would increase exponentially, because transistors would scale down so that more units could be packed together on a computer chip. The smaller and more densely packed the transistors, the shorter the distance electrons have to travel between them, therefore increasing a computer’s efficiency and speed. So as the number of transistors on an integrated circuit has doubled every 24 months, computing power has doubled about every 18 months.
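To get a feel for what these doubling rates mean, here is a minimal sketch in Python. The function name `growth_factor` and the chosen time spans are only illustrative; the 24-month and 18-month doubling periods are the ones quoted above.

```python
def growth_factor(years: float, doubling_period_years: float) -> float:
    """Factor by which a quantity grows after `years` if it doubles
    once every `doubling_period_years`."""
    return 2.0 ** (years / doubling_period_years)


# Transistor count doubling every 24 months versus
# computing power doubling every 18 months.
for years in (2, 6, 10):
    transistors = growth_factor(years, 2.0)
    computing_power = growth_factor(years, 1.5)
    print(
        f"after {years:>2} years: transistors x{transistors:.1f}, "
        f"computing power x{computing_power:.1f}"
    )
```

After six years, for example, the transistor count has doubled three times (a factor of 8), while computing power has doubled four times (a factor of 16). The gap keeps widening because both curves are exponential.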

Moore’s Law was regarded as a «rule of thumb» rather than a law: the technology industry intended to keep up with its growth rate and so set a road map based on the continuous innovation of transistors and chips in line with Moore’s Law. Because of the increasing demand for devices, manufacturers strive to innovate and create next-generation chips, lest they become obsolete in the face of innovative competition.

Many devices that we use nowadays owe their existence to the evolution of integrated circuits; Moore himself stated that «Integrated circuits will lead to such wonders as […] personal communications equipment», currently known as mobile phones. Our laptops and electronic wristwatches, medical imaging and digital processing technologies were made possible because of Moore’s Law. In fact, it has been argued that Moore’s Law is one of the main drivers of the economic growth seen in the last 50 years, as it has led to tremendous gains in productivity.

However, over the past decade the pace of innovation has slowed down, with Moore himself predicting the end of his law by 2025. To explain why, here is a small primer on transistors:

A transistor, while a simple invention in concept, is one of the foundations of our modern technological society. Without it, you would not be reading this article. Your phone probably has more than 1 billion transistors, which are microscopic and manufactured with incredible precision on a thin wafer of silicon. A transistor is like a switch, or gate, that either blocks a small current of electrons or lets it pass through. It is the billions of combinations of these gates opening and closing that allow a computer to perform all its tasks, from basic arithmetic calculations to displaying this text on your screen.
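To make the step from "switches" to "arithmetic" a little more concrete, here is a small sketch in Python. It is a toy model rather than a description of how any real chip is laid out. It assumes a single kind of gate, NAND (which can be built from a handful of transistors), and the helper names `nand`, `xor`, `and_` and `half_adder` are made up for this example.

```python
def nand(a: int, b: int) -> int:
    """NAND gate: the output is 0 only when both inputs are 1."""
    return 0 if (a and b) else 1


def xor(a: int, b: int) -> int:
    """XOR built from four NAND gates: 1 when exactly one input is 1."""
    n = nand(a, b)
    return nand(nand(a, n), nand(b, n))


def and_(a: int, b: int) -> int:
    """AND built from two NAND gates: 1 only when both inputs are 1."""
    n = nand(a, b)
    return nand(n, n)


def half_adder(a: int, b: int) -> tuple[int, int]:
    """Add two one-bit numbers and return (sum_bit, carry_bit)."""
    return xor(a, b), and_(a, b)


# Print the full truth table of one-bit addition.
for a in (0, 1):
    for b in (0, 1):
        sum_bit, carry_bit = half_adder(a, b)
        print(f"{a} + {b} -> carry {carry_bit}, sum {sum_bit}")
```

Chaining adders like this one, bit by bit, is enough to add numbers of any size, which is why billions of simple switches can add up to a computer.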

Transistors today measure around 10 to 20 nanometres. That is only around 100 times larger than an oxygen molecule, so small that engineers have run into a problem that they cannot economically fix: quantum tunnelling. At such small sizes, the laws of quantum mechanics take hold and electrons start to obey different rules: sometimes they simply cross a closed transistor, corrupting the data in the process. This problem currently has no economical solution, and so we have reached the death of Moore’s Law.
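A rough way to see why shrinking makes tunnelling so much worse is the standard textbook estimate for an electron meeting a rectangular energy barrier: the chance of crossing falls off as exp(-2κd), where d is the barrier width. The sketch below applies that estimate in Python. The barrier height of 1 electronvolt and the widths are illustrative assumptions, not measurements of any real transistor, and the function name `tunnelling_probability` is made up for this example.

```python
import math

HBAR = 1.054571817e-34         # reduced Planck constant, in joule-seconds
ELECTRON_MASS = 9.1093837e-31  # electron mass, in kilograms
EV = 1.602176634e-19           # joules per electronvolt


def tunnelling_probability(width_m: float, barrier_ev: float = 1.0) -> float:
    """Approximate chance that an electron crosses a rectangular barrier.

    Uses the wide-barrier estimate T ~ exp(-2 * kappa * d), where
    `barrier_ev` is how far the barrier rises above the electron's energy
    (an assumed, illustrative value).
    """
    kappa = math.sqrt(2.0 * ELECTRON_MASS * barrier_ev * EV) / HBAR
    return math.exp(-2.0 * kappa * width_m)


# Illustrative barrier widths only.
for width_nm in (5.0, 2.0, 1.0):
    probability = tunnelling_probability(width_nm * 1e-9)
    print(f"barrier {width_nm} nm wide: tunnelling probability ~ {probability:.1e}")
```

Because the width sits inside an exponential, making the barrier a few times thinner raises the leakage by many orders of magnitude. That is why the problem appears so abruptly at the smallest scales.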

The end of Moore’s Law has long been considered an inevitability. Gordon Moore himself set a conservative timeframe in his original 1965 paper, estimating that his observed rule would remain “constant for at least ten years”. And its death has been proclaimed many times since. Only the ingenuity of the industry has kept it alive for so long.

But this endgame does not signify an end for the advancement of computational power. Several alternative possibilities lie on the horizon, and these range from the simple and intuitive to the fantastical possibilities brought by the advent of quantum computing.

On the intuitive side, we could focus on creating specialized chips for certain tasks. The processor that powers your device (smartphone or computer) is a “beefy” unit, capable of undertaking a wide variety of tasks, albeit at the cost of efficiency. For very specific tasks, specialized chips may be created. Other solutions may lie anywhere from finding other materials to improving software so that it takes better advantage of current architectures: did you know that Excel does not take advantage of the extra cores in your computer to handle those heavier, 20,000-row tasks? Although these types of improvements can still take us a long way, at least a forty-fold increase in computing power (around 5 years of innovation), they can only take us so far. When it comes to transitioning to other materials, graphene has been touted as a possible replacement, but the research is still in the early stages.

On the more futuristic side, quantum computing could usher in a new age of technology to rival that of the integrated circuit. Last year, Google published a paper in Nature claiming to have solved a task in under 4 minutes that would have taken a modern supercomputer, the processing equivalent of “around 100,000 desktop computers”, over 10,000 years. There are also studies looking into conceptually abstract possibilities like using DNA to perform arithmetic and logic operations, as well as to store data.

Although we will probably never have personal quantum or “DNA” computers, because their upkeep costs and upfront investment are prohibitively high, a world in which a handful of companies offer processing solutions to anyone via cloud computing, much like we see today, sounds plausible.

What impact could the end of Moore’s Law have on the economy? How can we bring about the age of AI if we do not have the hardware to support software innovation? We can only wait and see what happens.

Sources: Intel, Washington Post, Nature, 311 Institute, MIT Technology Review, NY Times, Wikipedia