“After full experience of the insufficiency of the existing federal government, you are invited to deliberate upon a New Constitution for the United States of America. The subject speaks its own importance; comprehending in its consequences, nothing less than the existence of the UNION, the safety and welfare of the parts of which it is composed, the fate of an empire, in many respects, the most interesting in the world.”

Following the American Revolutionary War, and the drafting and ratification of the Articles of Confederation by the 13 states, it soon became obvious that the young confederate government was severely hindered by an overall lack of power. With no executive or judicial branch, it lacked, for example, the authority to tax. Since it could only request money from the states and had no means of enforcing those requests, both the government and the U.S. army were severely underfunded.

It was, therefore, to evaluate and, possibly, amend the Articles of Confederation that delegates from 12 of the 13 states (Rhode Island sent none) gathered in Philadelphia in 1787, in what came to be called the Philadelphia (or Constitutional) Convention. Although a new constitution was drafted and signed at this convention, that was not the goal the delegates had in mind when they assembled. However, since many were convinced of the inadequacy of the existing system, the convention soon evolved into an effort to redesign the whole political structure of the union, turning a loose confederacy into a more solidly cemented federal union.

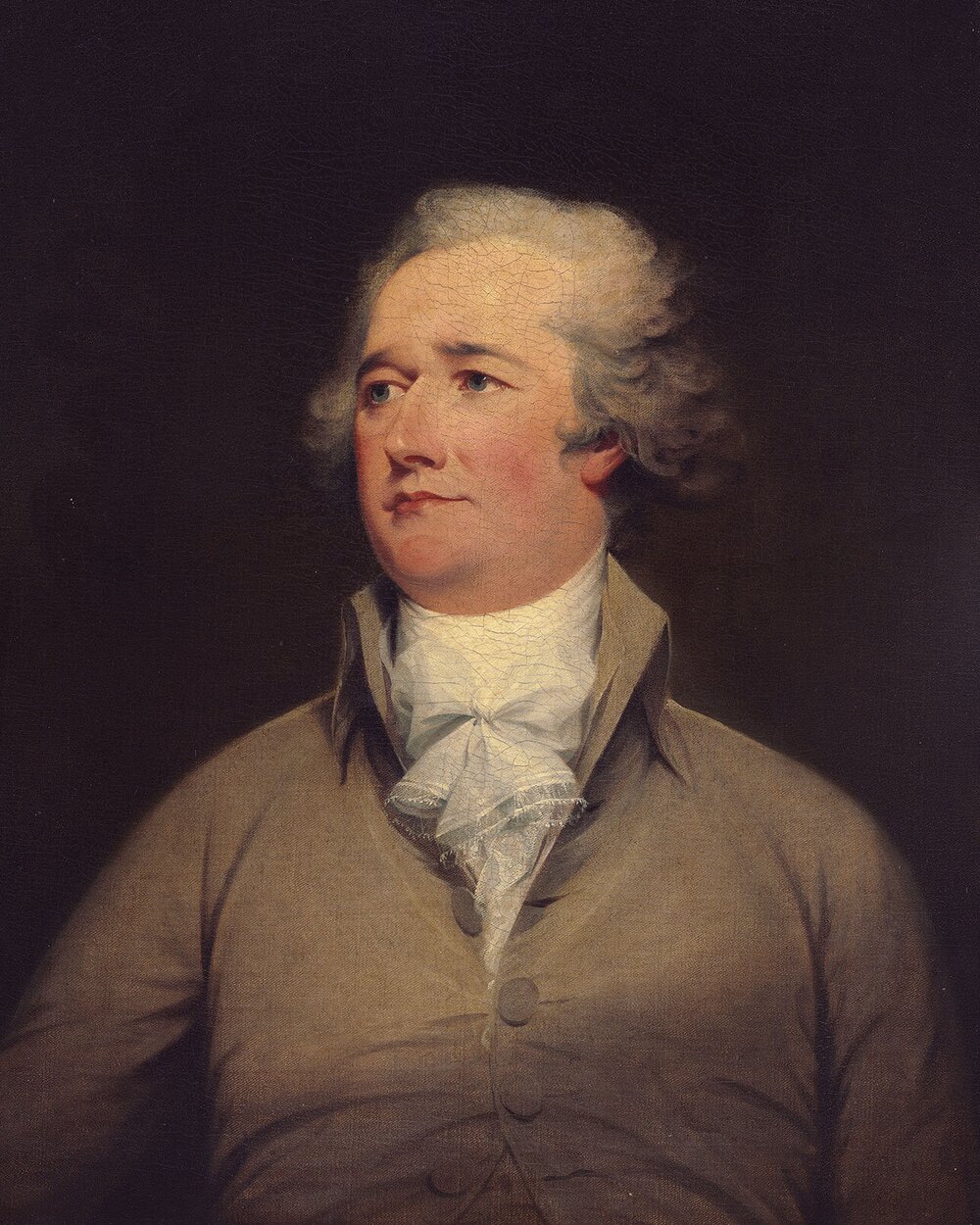

However, drafting and signing the new Constitution at the convention was only the first step. Next, and most critically, the Constitution had to be ratified by 9 of the 13 states to enter into force. To win support for ratification, three men wrote a series of essays now collectively known as The Federalist Papers: Alexander Hamilton, a convention delegate from New York and the future 1st Secretary of the Treasury; James Madison, one of the most central figures in the drafting of the new Constitution and the Bill of Rights and a future president of the U.S.; and John Jay, the future 1st Chief Justice of the Supreme Court.

Alexander Hamilton · The Federalist Papers · James Madison

These essays, 85 in total (1), were published as serial installments in newspapers. Their topics ranged from the benefits of a federal union under the Constitution in matters of war and taxation to the principle of separation of powers, how the Constitution upholds it, and how the system of checks and balances between the three branches of government works. Throughout, the authors attempted to refute many of the anti-ratification arguments of the time.

Although their effect in promoting ratification of the Constitution cannot be verified, they are certainly a window into the political and historical framing of the Federalist vs. Anti-Federalist debates of the time, and they can prove useful in understanding some of the arguments made about federalism in the European Union today.

In Federalist No. 11, Hamilton discusses the advantages of a common commercial policy, achievable through federalization with, for example, a ban on inter-state tariffs. This echoes many of the free-trade ideas that helped create and develop the European Union’s common market.

In Federalist No. 30, Hamilton describes the poor state of the government’s revenues under the Articles of Confederation and argues that such a government’s public debt will be extremely precarious. Indeed, speaking of the government’s future creditors, he says:

“to depend upon a government, that must itself depend upon thirteen other governments, for the means of fulfilling its contracts, (…) would require a degree of credulity, not often to be met with in the pecuniary transactions of mankind”

The solution to such a problem, he argued, lay in giving the new Congress the general power to tax and levy tariffs.

However, federal revenues depended mainly on tariffs until the beginning of the 20th century, when the income tax was created (2). As this new tax was levied and grew in size, federal fiscal policy also grew in scope, for example with the New Deal during the Great Depression.

Both Hamilton’s arguments for a more energetic government, empowered to tax, and the expansion of federal fiscal policy after the Great Depression, which followed the creation of the income tax, provide insights into current discussions on expanding centralized fiscal responses by the European Union.

Indeed, for the central institutions of the European Union to be able to provide a more timely and powerful response to a crisis such as the present one, they must also have access to larger sources of revenue.

If we want more powerful central institutions in the EU, their budgets must also increase.

In 2017, EU budget expenditures were about €137,000 million (€137 billion). These paled in comparison to the U.S. federal government’s almost $4,000,000 million ($4 trillion) in outlays. In Europe, where national governments are already very fiscally active, it is hard to imagine a scenario where an increase of the central EU budget to levels more comparable to those of the U.S. federal government would not come at the cost of shrinking national governments’ budgets.
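To make the scale of that gap concrete, here is a minimal back-of-the-envelope sketch in Python. It uses only the two 2017 figures quoted above. The exchange rate is an assumption added purely for illustration and is not a figure from this article, so the result should be read as an order of magnitude rather than a precise measurement.

```python
# Figures quoted in the text, in millions of each currency.
EU_EXPENDITURE_EUR_M = 137_000   # 2017 EU budget expenditures (approximate)
US_OUTLAYS_USD_M = 4_000_000     # 2017 U.S. federal outlays (approximate)

# ASSUMPTION: illustrative EUR-to-USD rate, not taken from the article.
USD_PER_EUR = 1.13


def eu_share_of_us_outlays(eu_eur_m, us_usd_m, usd_per_eur):
    """Return EU expenditures as a fraction of U.S. outlays, both in USD."""
    return (eu_eur_m * usd_per_eur) / us_usd_m


share = eu_share_of_us_outlays(EU_EXPENDITURE_EUR_M, US_OUTLAYS_USD_M, USD_PER_EUR)
print(f"EU budget as a share of U.S. federal outlays: {share:.1%}")
print(f"U.S. outlays are roughly {1 / share:.0f} times the EU budget")
```

Under the assumed exchange rate, the EU budget comes out at a little under 4% of U.S. federal outlays. Any plausible exchange rate leaves the conclusion unchanged: the two budgets differ by more than an order of magnitude.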

I am not arguing for or against the claim that a more centralized response by the EU would, then, be net-beneficial. Rather, the question I want to pose is whether such a response, at the expense of member states’ fiscal power, is politically achievable. That question is impossible to answer definitively. On the one hand, emergency situations, like the Great Depression in the U.S., seem to be breeding grounds for centralization; on the other, the shifting political landscape in Europe, notably the rise of Euro-skeptic parties, may portend a grimmer fate for European federalism.

(1) You can find The Federalist Papers at: https://www.congress.gov/resources/display/content/The+Federalist+Papers or listen to public domain recordings of them by LibriVox at: https://librivox.org/the-federalist-papers-by-alexander-hamilton-john-jay-and-james-madison/

(2) Even though Clause 1 of Section 8 of Article 1 of the U.S. Constitution gave Congress the ability to levy taxes, it was only with the ratification of the 16th Amendment to the U.S. Constitution that Congress was able to levy a nationwide income tax without apportioning it among the states.